ASL Annotation Dashboard

Review continuous-video annotations and model outputs in one place.

Open Demo

I'm a computer scientist who's very nerdy about American Sign Language linguistics, especially phonology, lexical semantics, and psycholinguistics.

I'm currently a Postdoctoral Research Associate in the Action and Brain Lab under Dr. Lorna Quandt, where I focus on building educational technologies for deaf and hard-of-hearing students.

I received my Ph.D. in Computer Science from the University of Southern California in 2025, and my B.S. in Computer Science from Rhodes College in 2019. Click here for my curriculum vitae.

Today, artificial intelligence (AI) technologies are radically transforming the ways we communicate, learn, and work. However, these resources are not accessible to sign language users, including many deaf and hard-of-hearing (DHH) people. Therefore, I seek to collaboratively build technologies that center DHH signers. My prior work has focused on the basic skills associated with , , and American Sign Language. What kinds of knowledge gaps do state-of-the-art AI models have while processing ASL data? How can perspectives, such as theories of and , help close those gaps? What can AI models do to advance our understanding of ASL linguistics, or empower DHH people?

Sign languages are full natural languages with their own internal structure. My research focuses on the linguistic organization of ASL signs, especially how form (phonology) and meaning (semantics) are patterned across the lexicon, and how those patterns can be documented, analyzed, and later used in sign language technologies.

In visual-manual modalities, phonemes correspond to articulatory patterns that signal a change in meaning. For example, the prototypical ASL signs for "mother" and "father" are contrasted by location (chin or forehead, respectively). Changes in meaning can also be signaled through visual contrasts in handshape, palm orientation, movement, facial features, and body movements.
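To make the idea of a phonological contrast concrete, here is a minimal sketch that treats each sign as a bundle of features and searches for minimal pairs, that is, signs differing in exactly one feature. The `Sign` class, the three-feature inventory, the feature values, and the `PLACEHOLDER` entry are simplified assumptions for illustration, not a real phonological coding scheme.

```python
from dataclasses import dataclass, fields
from itertools import combinations


@dataclass(frozen=True)
class Sign:
    """A sign as a bundle of (heavily simplified) phonological features."""
    gloss: str
    handshape: str
    location: str
    movement: str


def differing_features(a, b):
    """Return the names of the phonological features on which two signs differ."""
    return [
        f.name
        for f in fields(Sign)
        if f.name != "gloss" and getattr(a, f.name) != getattr(b, f.name)
    ]


def minimal_pairs(signs):
    """Yield (gloss_a, gloss_b, feature) for signs that differ in exactly one feature."""
    for a, b in combinations(signs, 2):
        diff = differing_features(a, b)
        if len(diff) == 1:
            yield a.gloss, b.gloss, diff[0]


# Toy lexicon; the feature values are illustrative codes, not expert annotations.
LEXICON = [
    Sign("MOTHER", handshape="5", location="chin", movement="tap"),
    Sign("FATHER", handshape="5", location="forehead", movement="tap"),
    Sign("PLACEHOLDER", handshape="1", location="chest", movement="circle"),
]

pairs = list(minimal_pairs(LEXICON))
print(pairs)
```

In this toy lexicon only the MOTHER/FATHER pair is reported, and the contrasting feature is location, matching the example in the text.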

The ability to consistently represent these contrasts underpins comprehension and production. My papers at , , and have shown that machine learning models do not adequately learn this skill, but that specific training techniques can improve it, leading to better performance on tasks like automatic sign recognition and predicting the meaning of novel signs.

In order to understand the meaning of a single signed word, a number of skills must be available. In the case of fingerspelling, comprehension requires repeated exposure in ASL settings or prior English knowledge.

In the case of iconic or depicting signs, knowledge of the real world and of certain ASL conventions, like classifier handshapes, is often required to understand what a signer is referring to.

Additionally, there may be one or more regional variants that do not actually signal a change in meaning despite having a very different form.

I am interested in how machine learning models learn to understand these kinds of signing, and in particular when they break down.

In these situations, what prior knowledge or representational strategies can ameliorate their knowledge gap?

My work on the American Sign Language Knowledge Graph (ASLKG) was a first attempt at capturing some of these complex relationships between form and meaning.

We showed that the ability to label the phonological properties of signs predicts better comprehension skills on never-before-seen signs.

Efforts to model sign language data are constrained by how representative their training data is.

The of isolated signs and their phonemes and the both center deaf and hard-of-hearing signers as well as linguistic models of their phonological and semantic structure.

I use linguistic knowledge in sign language (SL) technologies to recover sign structure from video, interpret unfamiliar signs, and learn compact representations that support broader coverage of the ASL lexicon.

I train models to recover phonological features such as handshape, movement, and location directly from video, as in my and work, so higher-level predictions have something structured to build on. I use those visual components to improve sign recognition, especially when signs are rare, visually similar, or produced with variation. I also study compact sign encodings that preserve the parts of a sign, not just its identity, so the model can generalize across related forms instead of memorizing isolated labels.

I use knowledge graphs (Demo) to represent signs as linked bundles of linguistic facts about form, meaning, and lexical structure rather than as isolated labels. I study unseen sign understanding by asking whether a model can estimate what a sign means even when it has never seen that exact sign before, using regularities between form and meaning to guide its predictions. I also use embedding neighborhoods to place related signs near one another in a shared space, so similarity itself becomes a tool for exploration, interpretation, and semantic generalization.
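As a rough illustration of "linked bundles of linguistic facts", the sketch below stores signs as (subject, relation, object) triples and guesses a semantic property for an unseen sign from the form facts it shares with known signs. The triples, the relation names, and the overlap-weighted voting rule are all assumptions made for this example; they are not the contents of the ASLKG or the method used in my papers.

```python
from collections import Counter

# Toy (subject, relation, object) facts; every value is an illustrative placeholder.
TRIPLES = [
    ("MOTHER", "location", "chin"),
    ("MOTHER", "handshape", "5"),
    ("MOTHER", "semantic_field", "family"),
    ("FATHER", "location", "forehead"),
    ("FATHER", "handshape", "5"),
    ("FATHER", "semantic_field", "family"),
    ("THINK", "location", "forehead"),
    ("THINK", "handshape", "1"),
    ("THINK", "semantic_field", "cognition"),
]
FORM_RELATIONS = {"location", "handshape"}


def facts_about(sign):
    """Collect every (relation, object) fact stored for one sign."""
    return {(r, o) for s, r, o in TRIPLES if s == sign}


def guess_semantic_field(form_facts):
    """Vote over known signs, weighting each by how many form facts it shares."""
    votes = Counter()
    for sign in {s for s, _, _ in TRIPLES}:
        facts = facts_about(sign)
        form = {f for f in facts if f[0] in FORM_RELATIONS}
        overlap = len(form_facts & form)
        for relation, value in facts:
            if relation == "semantic_field" and overlap:
                votes[value] += overlap
    return votes.most_common(1)[0][0] if votes else None


# A hypothetical unseen sign, described only by its form.
unseen = {("location", "chin"), ("handshape", "5")}
print(guess_semantic_field(unseen))
```

The point of the design is that the unseen sign never needs its own label: it borrows evidence from neighbors that share parts of its form.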

I am interested in models that can produce signs from linguistically aligned representations, which is the central motivation behind my VQ work. Linguistic alignment has been shown to improve coverage for out-of-vocabulary signs, giving models a way to represent unfamiliar items through reusable structure rather than failing on anything outside the training set.
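Assuming "VQ" here refers to vector quantization, the following sketch shows how a sign vector could be mapped to discrete codes, one per linguistic slot, so that an unfamiliar sign is still expressible as a new combination of familiar codes. The two slots, the 2-d codebooks, and the 4-d input layout are hypothetical choices for illustration only.

```python
def squared_distance(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def quantize(vector, codebook):
    """Return (index, code) for the codebook entry nearest to `vector`."""
    index = min(
        range(len(codebook)),
        key=lambda i: squared_distance(vector, codebook[i]),
    )
    return index, codebook[index]


# Hypothetical per-slot codebooks; real codebooks would be learned from data.
CODEBOOKS = {
    "handshape": [(0.0, 0.0), (1.0, 1.0)],
    "location": [(0.0, 1.0), (1.0, 0.0)],
}


def encode(sign_vector):
    """Split a 4-d vector into two 2-d slots and quantize each slot separately."""
    slots = {"handshape": sign_vector[:2], "location": sign_vector[2:]}
    return {name: quantize(vec, CODEBOOKS[name])[0] for name, vec in slots.items()}


codes = encode((0.9, 1.2, 0.1, 0.8))
print(codes)
```

Because each slot is quantized independently, two codebooks of size two can describe four distinct slot combinations, including ones never seen together during training.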

Explore ASL signs as linked facts about form and meaning.

Open Demo

Compare how related signs cluster in learned embedding spaces.

Open Demo

This work studies how signing speed, space, and phonology vary across isolated signs, lecture-type signing, and dialogue signing.

We study how pragmatics shapes ASL articulation in educational STEM settings by collecting a motion-capture dataset spanning instructor-student dialogue, isolated vocabulary, signed lecture, and interpreted articles. Across these contexts, dialogue signs are substantially shorter than isolated productions and show pragmatic adaptations that are absent in monologue settings. We use the dataset to quantify interaction-driven variation and evaluate whether sign embeddings capture STEM signs and the degree to which repeated forms become entrenched over time.

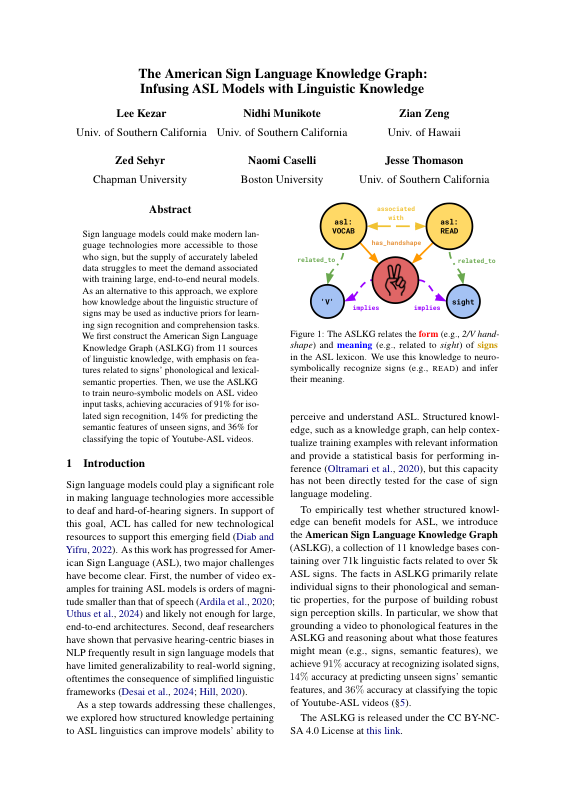

The ASLKG is a linguistic knowledge base for studying lexicon-wide relationships related to form, meaning, and other linguistic properties.

We introduce the American Sign Language Knowledge Graph (ASLKG), a collection of linguistic facts drawn from multiple resources that connects ASL signs to phonological and semantic properties. The graph serves as an inductive prior for neurosymbolic ASL models, improving isolated sign recognition while also supporting prediction of unseen semantic features and topic classification for YouTube-ASL videos. This work argues that structured linguistic knowledge can help ASL systems both recognize forms and reason about meaning.

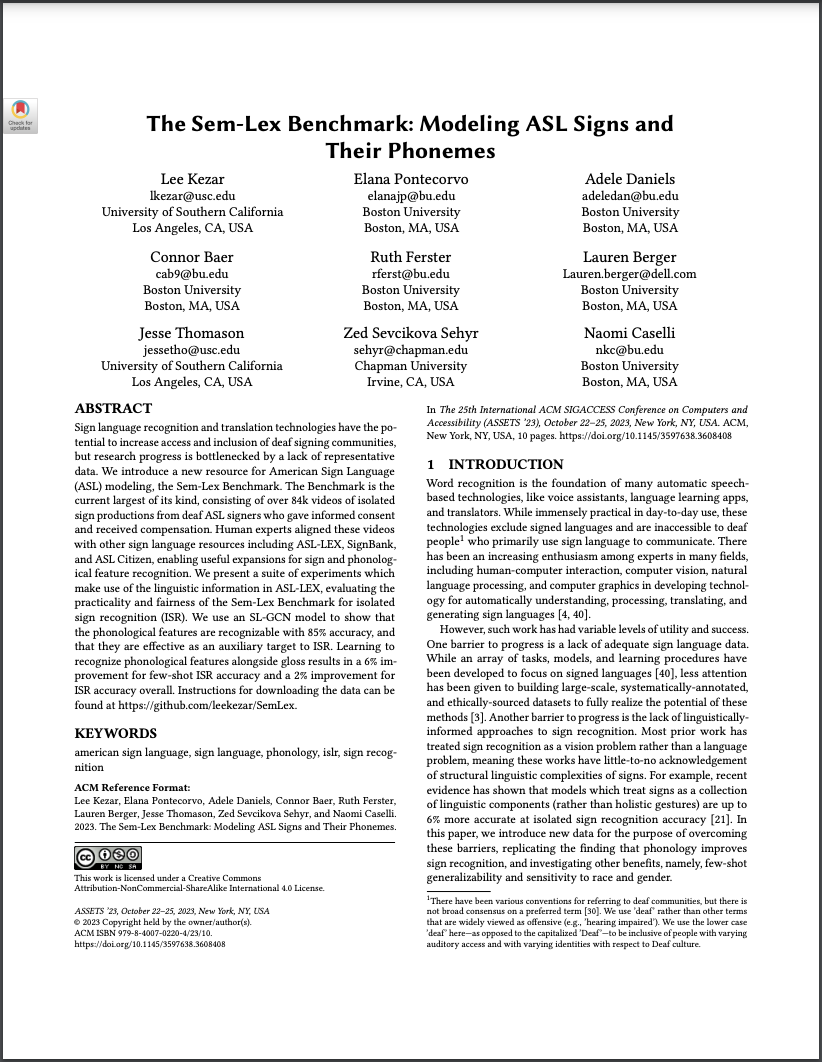

The Sem-Lex Benchmark provides 91,000 isolated sign videos for the tasks of recognizing signs and 16 phonological feature types.

Sign language and translation technologies have the potential to increase access and inclusion of deaf signing communities, but research progress is bottlenecked by a lack of representative data. We introduce a new resource for American Sign Language (ASL) modeling, the Sem-Lex Benchmark. The Benchmark is currently the largest of its kind, consisting of over 84k videos of isolated sign productions from deaf ASL signers who gave informed consent and received compensation. Human experts aligned these videos with other sign language resources including ASL-LEX, SignBank, and ASL Citizen, enabling useful expansions for sign and phonological feature recognition. We present a suite of experiments which make use of the linguistic information in ASL-LEX, evaluating the practicality and fairness of the Sem-Lex Benchmark for isolated sign recognition (ISR). We use an SL-GCN model to show that the phonological features are recognizable with 85% accuracy, and that they are effective as an auxiliary target to ISR. Learning to recognize phonological features alongside gloss results in a 6% improvement for few-shot ISR accuracy and a 2% improvement for ISR accuracy overall. Instructions for downloading the data can be found at https://github.com/leekezar/SemLex.

We train SL-GCN models to recognize 16 phonological feature types (like handshape and location), achieving 75-90% accuracy.

Like speech, signs are composed of discrete, recombinable features called phonemes. Prior work shows that models which can recognize phonemes are better at sign recognition, motivating deeper exploration into strategies for modeling sign language phonemes. In this work, we learn graph convolution networks to recognize the sixteen phoneme "types" found in ASL-LEX 2.0. Specifically, we explore how learning strategies like multi-task and curriculum learning can leverage mutually useful information between phoneme types to facilitate better modeling of sign language phonemes. Results on the Sem-Lex Benchmark show that curriculum learning yields an average accuracy of 87% across all phoneme types, outperforming fine-tuning and multi-task strategies for most phoneme types.

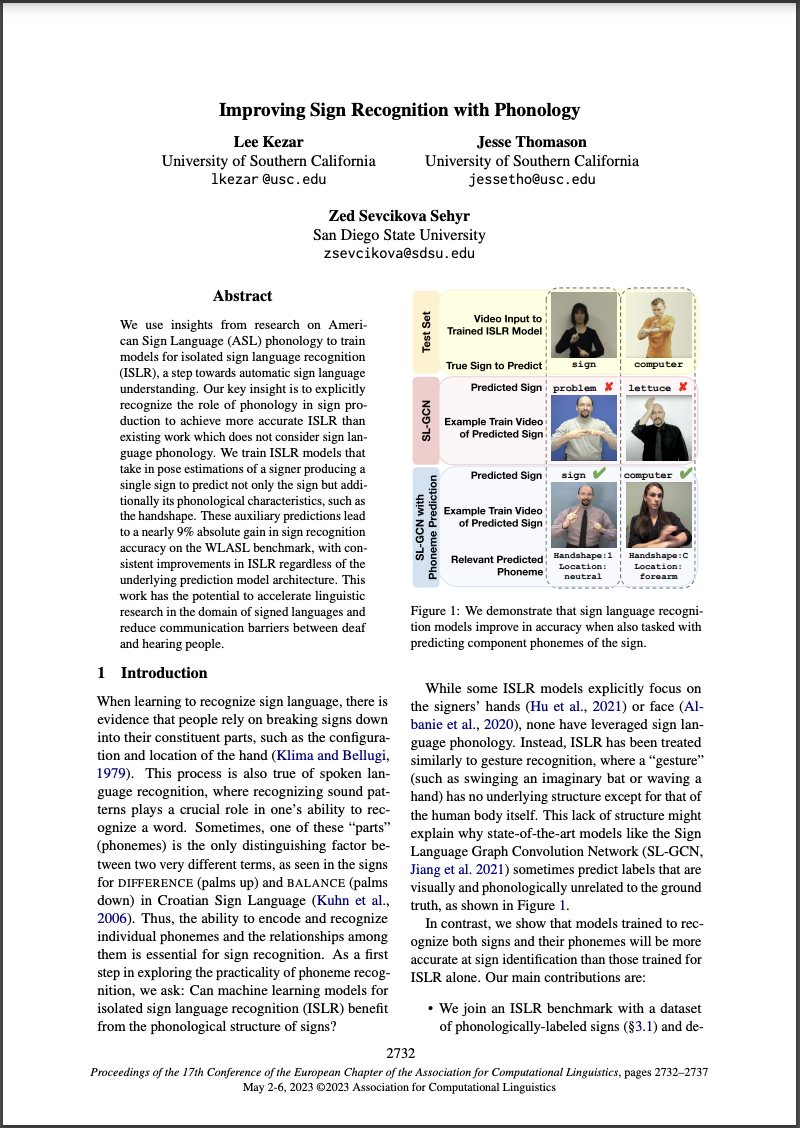

We show that adding phonological targets boosts sign recognition accuracy by ~9%!

We use insights from research on American Sign Language (ASL) to train models for isolated sign language recognition (ISLR), a step towards automatic sign language understanding. Our key insight is to explicitly recognize the role of phonology in sign production to achieve more accurate ISLR than existing work which does not consider sign language phonology. We train ISLR models that take in pose estimations of a signer producing a single sign to predict not only the sign but additionally its phonological characteristics, such as the handshape. These auxiliary predictions lead to a nearly 9% absolute gain in sign recognition accuracy on the WLASL benchmark, with consistent improvements in ISLR regardless of the underlying prediction model architecture. This work has the potential to accelerate linguistic research in the domain of signed languages and reduce communication barriers between deaf and hearing people.
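The idea of auxiliary phonological predictions can be sketched as a combined training objective: the usual sign classification loss plus a weighted loss over phonological targets. This is a generic multi-task formulation written in plain Python under stated assumptions; the `aux_weight` parameter, the averaging over features, and the function names are hypothetical and not necessarily the objective used in the paper.

```python
import math


def cross_entropy(logits, target_index):
    """Negative log-likelihood of the target class under a softmax over logits."""
    peak = max(logits)  # subtract the max for numerical stability
    log_norm = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_norm - logits[target_index]


def multitask_loss(gloss_logits, gloss_target, phon_logits, phon_targets, aux_weight=0.5):
    """Sign (gloss) loss plus a weighted mean of per-feature phonological losses.

    phon_logits and phon_targets are dicts keyed by feature name, e.g. "handshape".
    aux_weight is an assumed hyperparameter controlling the auxiliary contribution.
    """
    gloss_loss = cross_entropy(gloss_logits, gloss_target)
    aux_losses = [
        cross_entropy(phon_logits[name], phon_targets[name]) for name in phon_targets
    ]
    aux_loss = sum(aux_losses) / len(aux_losses) if aux_losses else 0.0
    return gloss_loss + aux_weight * aux_loss


# Toy scores for one video: three candidate signs and four candidate handshapes.
loss = multitask_loss(
    gloss_logits=[2.0, 0.5, -1.0],
    gloss_target=0,
    phon_logits={"handshape": [0.1, 1.5, 0.0, -0.3]},
    phon_targets={"handshape": 1},
)
print(round(loss, 4))
```

With `aux_weight=0` the objective reduces to ordinary sign classification, which makes it easy to compare a model trained with and without the phonological targets.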

We classify sections in scholarly documents according to their pragmatic intent and study the differences between 19 disciplines.

Scholarly documents have a great degree of variation, both in terms of content (semantics) and structure (pragmatics). Prior work in scholarly document understanding emphasizes semantics through document summarization and corpus topic modeling but tends to omit pragmatics such as document organization and flow. Using a corpus of scholarly documents across 19 disciplines and state-of-the-art language modeling techniques, we learn a fixed set of domain-agnostic descriptors for document sections and “retrofit” the corpus to these descriptors (also referred to as “normalization”). Then, we analyze the position and ordering of these descriptors across documents to understand the relationship between discipline and structure. We report within-discipline structural archetypes, variability, and between-discipline comparisons, supporting the hypothesis that scholarly communities, despite their size, diversity, and breadth, share similar avenues for expressing their work. Our findings lay the foundation for future work in assessing research quality, domain style transfer, and further pragmatic analysis.